Operant conditioning is a learning process that results in the association of a behavior with a reward or punishment. To put it simply, we learn that actions have consequences and adapt our behavior accordingly. The two major protocols for operant conditioning are reinforcement and punishment. Even though we will extensively discuss all the operant conditioning protocols in a dedicated article, it is important to highlight what positive and negative reinforcement are and are not.

All reinforcing protocols, both positive and negative, aim to strengthen a behavior. This means that the characterization of a protocol as positive or negative does not describe the type of consequence used; positive reinforcement does not mean reward and negative reinforcement does not mean punishment. Instead, these terms reflect whether a stimulus is added or removed by the experimenter.

Consequently, positive reinforcement is achieved when a reward is provided after a behavior, to strengthen the expression of this behavior. For example, when a rodent presses a lever, food is provided and the rodent learns to press the lever more. On the other hand, negative reinforcement happens through the removal of a noxious stimulus, in order to enhance the behavior. For example, when a rodent presses a lever, a loud noise that was present in the background stops. Again, the rodent learns to press the lever more.

Positive reinforcement experiments are carried out in the operant conditioning apparatus. This is a large box that typically contains one or more levers that the animal learns to press in order to receive the reward. An additional feature of the device is a dispenser that is used to deliver the reward. Rewards can range from food and water to drugs with euphoric and reinforcing properties, such as narcotics.[1]

In this article, we will focus on operant conditioning using positive reinforcement and examine which brain regions are implicated in this learning process, what parameters should be regulated during the experiments and what kind of questions can be addressed using this paradigm.

Which Brain Regions are Involved in Operant Conditioning with Positive Reinforcement?

Positive reinforcement works through reward, which according to the traditional scientific dogma is governed by the neurotransmitter dopamine. Several lines of evidence support this theory. To begin with, drugs with reinforcing properties, such as the narcotics heroin and cocaine, hijack the dopaminergic circuit and increase dopamine release in the brain.[2] Furthermore, electrical stimulation of the medial forebrain bundle (MFB), which contains the mesolimbic dopaminergic projections from the ventral tegmental area (VTA) to the nucleus accumbens (NAc), increases intracranial self-stimulation, an experimental measure of reward.[3]

There is growing evidence that the reward system is much more complicated and relies on several neurotransmitter circuits and brain regions. Research indicates that the coordinated function of glutamatergic, GABAergic, cholinergic and serotonergic neurons is indispensable for the expression of an intact reward behavior. These neural circuits form a cortico-basal ganglia-thalamo-cortical loop, which apart from the VTA and NAc, contains the substantia nigra, the prefrontal cortex (PFC), the hippocampus, the amygdala, the thalamus and more. All these brain areas are involved in different aspects of the reward process, which describe the “wanting”, “liking” and learning phases of reward. [1]

An interesting 2012 study assessed the learning aspect of the reward process. The authors examined the action of the reinforcing drug methamphetamine in three different brain areas: the VTA, the NAc and the ventral hippocampus, a key area for learning. They showed that direct administration of methamphetamine into each of these areas produces a conditioned place preference in rats, highlighting the importance of the ventral hippocampus and the hippocampus-VTA loop in the reward process.[4]

But which of the aforementioned areas have been directly linked to positive reinforcement?

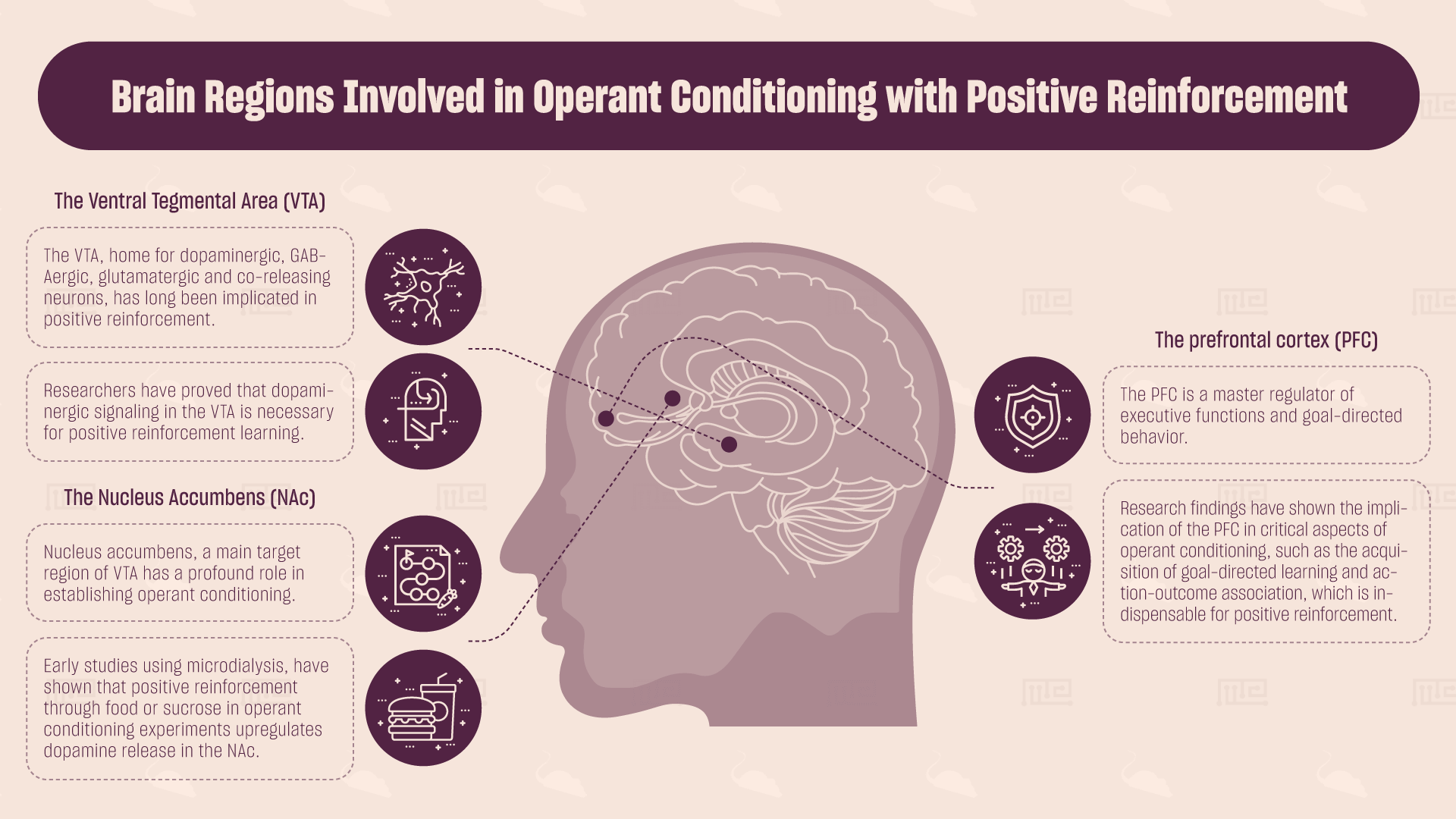

The Ventral Tegmental Area (VTA)

The VTA, home to dopaminergic, GABAergic, glutamatergic and co-releasing neurons, has long been implicated in positive reinforcement. In a recent study, researchers showed that transient optogenetic activation of VTA glutamatergic neurons or their synaptic terminals in the NAc drives positive reinforcement in mice, in a dopamine-independent manner.[5] Furthermore, blockade of muscarinic acetylcholine receptors by intracranial administration of antagonists directly into the VTA blocked the establishment of food-rewarded operant conditioning in rats, indicating the importance of this area for positive reinforcement.[6] Finally, using a similar methodology and a dopamine receptor antagonist, researchers have shown that dopaminergic signaling in the VTA is necessary for positive reinforcement learning.[7]

The Nucleus Accumbens (NAc)

The nucleus accumbens, a main target region of the VTA, has a profound role in establishing operant conditioning. Early studies using microdialysis, a method to directly measure neurotransmitter release in the brain, have shown that positive reinforcement through food or sucrose in operant conditioning experiments upregulates dopamine release in the NAc.[8][9] Additionally, neurotransmitter circuits within the NAc, such as the cholinergic and glutamatergic circuits, have been associated with positive reinforcement. Even though the exact biological mechanism remains elusive, studies have shown that pharmacological antagonism of cholinergic and glutamatergic receptors, by local administration of drugs that block their function, disrupts positive reinforcement learning and leads to behavioral deficits compared to sham-treated control animals.[10][11]

The Prefrontal Cortex (PFC)

The PFC is a master regulator of executive functions and goal-directed behavior. Furthermore, it is a target of the VTA. Experimental results from lesion studies, optogenetics and pharmacological studies converge on the implication of the PFC in critical aspects of operant conditioning, such as the acquisition of goal-directed learning and action-outcome association, which is indispensable for positive reinforcement.[12] However, the role of the PFC in positive reinforcement has not been fully characterized so far, as evidence suggests a differential role of the PFC depending on the reinforcer used in the study.[13] Thus, additional systematic studies are needed in order to understand in depth how the PFC participates in the establishment and execution of positive reinforcement and why its intact function is required.

Other Regions

Several other regions have been shown to participate in positive reinforcement. Using optogenetics, lesion studies and local intracranial administration of drugs, researchers have implicated the laterodorsal tegmental nucleus (LDTg),[14] the hippocampus,[15] the dorsal amygdala,[16] and the substantia nigra pars compacta[17] in operant conditioning learning. As more sophisticated methodologies are being developed, the field of positive reinforcement is constantly growing, providing exciting new findings on the brain circuit that orchestrates this complex behavior.

Positive Reinforcement Protocols

As mentioned above, positive reinforcement occurs by associating a specific behavior, such as a lever press or a nose poke, with the administration of a reward. In contrast to Pavlovian learning, positive reinforcement requires the spontaneous expression of the behavior by the test subject first, which is then strengthened by the experimenter using rewards.

Early in the history of positive reinforcement experiments, researchers understood how strongly the schedule of reinforcement influences the course and success of operant conditioning. The schedule of reinforcement is the rule that governs the delivery of the reinforcer in response to the expression of the desired behavior. According to the schedule of reinforcement used, different types of positive reinforcement experiments can be conducted. Reinforcement may be continuous, meaning that each time the subject performs the action a reward is provided, or it can be intermittent, following a specific rate of reinforcement.

All positive reinforcement protocols are based on the same experimental procedure. The rodent is placed in the operant conditioning apparatus and is provided with a reward when it presses the lever, according to the schedule of reinforcement. The rate of response, which corresponds to the number of responses in a given time period, is scored. Moreover, researchers usually construct the cumulative curve, a graph that plots the running total of responses against time over the whole experimental session. The slope of the cumulative curve also functions as a measure of the rate of response.
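The two measures described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the bin width and the list of press times are hypothetical and do not come from any particular apparatus or software package.

```python
from itertools import accumulate


def bin_responses(press_times, session_length, bin_width):
    """Count lever presses in consecutive time bins of equal width."""
    n_bins = int(session_length // bin_width)
    counts = [0] * n_bins
    for t in press_times:
        index = int(t // bin_width)
        if 0 <= index < n_bins:  # ignore presses outside the session
            counts[index] += 1
    return counts


def cumulative_curve(counts):
    """Running total of responses, one value per time bin."""
    return list(accumulate(counts))


def response_rate(press_times, session_length):
    """Overall rate of response: presses per unit of time."""
    return len(press_times) / session_length


# Made-up press times (in seconds) for a 30-second session.
press_times = [2, 5, 11, 12, 14, 21, 22, 23, 24, 29]
counts = bin_responses(press_times, session_length=30, bin_width=10)
print(counts)                    # [2, 3, 5]
print(cumulative_curve(counts))  # [2, 5, 10]
print(response_rate(press_times, session_length=30))  # presses per second
```

In this made-up session the per-bin counts grow from 2 to 5, so the cumulative curve becomes steeper over time, which is how an increasing rate of response appears on the graph.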

In the following section, we will discuss in greater detail the existing protocols for positive reinforcement and present their advantages and disadvantages.

- Continuous reinforcement involves the delivery of the reinforcer every time the desired behavior occurs. An example of continuous reinforcement is providing a food reward every time the rodent presses a lever. Continuous reinforcement protocols lead to relatively quick learning; however, extinction also occurs very quickly. Extinction is the reverse process of conditioning, during which the behavior that has been previously reinforced no longer leads to reward. For example, in a positive reinforcement protocol, when the behavior no longer results in a reward, the rodent will gradually stop performing this action and the behavior will be extinguished. The rate of extinction is an important aspect of reinforcement learning and reflects the strength of the learning process.

- Fixed-ratio reinforcement is an intermittent reinforcement protocol that involves the delivery of the reinforcer after the behavior has been expressed a specific number of times. For example, a food reward is provided after 10 lever presses. This type of reinforcement may lead to an increasing response rate, meaning that the rodent will press the lever more and more in order to maximize its reward.

- Fixed-interval reinforcement is similar to the fixed-ratio schedule, but the reinforcer is provided only after a specific time period has elapsed, independently of how many times the behavior was performed during that time. For example, a researcher may use an interval period of 30 seconds. In this case, the rodent is rewarded with a food pellet the first time it presses the lever after at least 30 seconds have passed since the previous reward. No matter how many times the rodent presses the lever, it will not get the reward until the interval period has elapsed. This reinforcement protocol leads to a different pattern of behavior: the rodent usually presses the lever less in the first part of the interval period, immediately after the reinforcer has been provided, and more in the final part of the interval period.

- Variable-ratio reinforcement and variable-interval reinforcement deliver the reinforcer after a varied number of responses or a varied time period, respectively. For example, a rodent may receive the reward initially after 1 lever press, then after 2 and then after 5. Similarly, in the variable-interval reinforcement protocol, the reward is delivered in response to the first lever press after intervals of 10 seconds, then 20 seconds and then 50 seconds.

The ratio or interval does not have to increase. The experimenter may choose an alternating protocol, where the ratio or interval increases in the beginning and then decreases, or even a completely random protocol of reinforcement, which provides rewards at various ratios and intervals. The unpredictable nature of the variable reinforcement protocols leads to a steadier response, because the rodent cannot know when it will receive the next reward, so it expresses the behavior without significant pauses during the inter-reward periods.
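The schedules described above differ only in the rule that decides whether a given lever press is rewarded. The following Python sketch makes these rules explicit. It is an illustration rather than the control logic of any real apparatus: the class names, the `press` method and the convention that the first interval is timed from the start of the session are all assumptions. A fixed schedule is written as a one-element list, a variable schedule as a longer list that is repeated in a cycle, and continuous reinforcement as a ratio of 1.

```python
from itertools import cycle


class RatioSchedule:
    """Reward after a required number of presses (fixed or variable ratio)."""

    def __init__(self, requirements):
        self._requirements = cycle(requirements)
        self._needed = next(self._requirements)
        self._count = 0

    def press(self, t):
        """Register a press at time t; return True if it is rewarded."""
        self._count += 1
        if self._count >= self._needed:
            self._count = 0
            self._needed = next(self._requirements)
            return True
        return False


class IntervalSchedule:
    """Reward the first press after a required time since the last reward."""

    def __init__(self, intervals):
        self._intervals = cycle(intervals)
        self._wait = next(self._intervals)
        self._last_reward = 0.0  # assumption: first interval starts at t = 0

    def press(self, t):
        """Register a press at time t; return True if it is rewarded."""
        if t - self._last_reward >= self._wait:
            self._last_reward = t
            self._wait = next(self._intervals)
            return True
        return False


def rewarded_presses(schedule, press_times):
    """Return the times of the presses that earned a reward."""
    return [t for t in press_times if schedule.press(t)]


# Fixed ratio of 10: with one press per second, presses 10 and 20 pay off.
print(rewarded_presses(RatioSchedule([10]), range(1, 21)))       # [10, 20]
# Fixed interval of 30 s.
print(rewarded_presses(IntervalSchedule([30]), [5, 29, 31, 40, 62]))  # [31, 62]
# Variable ratio 1, 2, 5 and variable interval 10, 20, 50 s, as in the text.
print(rewarded_presses(RatioSchedule([1, 2, 5]), range(1, 9)))   # [1, 3, 8]
print(rewarded_presses(IntervalSchedule([10, 20, 50]), [5, 12, 20, 33, 40, 90]))  # [12, 33, 90]
```

For a random variable schedule, the list could be filled with randomly drawn values before it is passed to the class; a fixed list is used here only so that the output is reproducible.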

Variable reinforcement protocols produce high response rates and low extinction rates, but are often avoided in the early steps of conditioning because the association is established faster with a continuous reinforcement protocol. On the other hand, even though continuous reinforcement protocols lead to a quicker establishment of the desired behavior, they may easily result in a satiety effect, meaning that the test subject may lose interest in the reward, or take it for granted, which eventually has a negative impact on the reinforcement process. To circumvent this problem, researchers usually shift gradually from the continuous to a partial reinforcement protocol after the initial establishment of the desired behavior. Overall, it is important to remember, firstly, that the continuous protocol is better than the intermittent protocols for the initial phase of reinforcement learning and, secondly, that the variable protocols are more efficient than the respective fixed protocols.
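One way to picture the gradual shift from continuous to partial reinforcement is a rule that raises the ratio requirement only after the animal has performed well under the current one. The Python sketch below is purely hypothetical: the criterion of 20 rewards per session, the step of 2, the target ratio of 5 and the reward counts are made-up values chosen for illustration, not recommendations from the literature.

```python
def next_ratio(current_ratio, rewards_earned, criterion, step, target_ratio):
    """Raise the ratio requirement if the session met the criterion."""
    if rewards_earned >= criterion:
        return min(current_ratio + step, target_ratio)
    return current_ratio


def ratios_by_session(rewards_per_session, criterion=20, step=2, target_ratio=5):
    """Return the ratio requirement used in each successive session.

    Training starts with continuous reinforcement (ratio 1).
    """
    ratio = 1
    used = []
    for rewards in rewards_per_session:
        used.append(ratio)
        ratio = next_ratio(ratio, rewards, criterion, step, target_ratio)
    return used


# Made-up number of rewards earned in five consecutive sessions.
print(ratios_by_session([12, 25, 30, 18, 40]))  # [1, 1, 3, 5, 5]
```

In this example the animal stays on continuous reinforcement for two sessions, because the first session falls short of the criterion, and then moves stepwise to a fixed ratio of 5.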

What Kind of Questions Can be Addressed Using Positive Reinforcement?

Positive reinforcement experiments are used to answer a variety of scientific questions. One major question is the neurobiological substrate of positive reinforcement and operant conditioning. Technological advances, such as optogenetic manipulation of specific neurons in specific brain areas, nowadays allow a more precise in vivo characterization of the brain circuitry involved in this process. Looking in greater detail, we can manipulate brain function with an unprecedented spatial and temporal resolution, in order to unveil the structural and functional determinants of reward and positive reinforcement.

Another main area of research concerns drugs and pharmacological substances with reinforcing properties. Whether studying well-known drugs of abuse, such as ethanol and nicotine, or newly synthesized pharmacological compounds, understanding the molecular, genetic and environmental mechanisms involved is of paramount importance for advancing the field of addiction and drug abuse. How is addiction established? How can we treat addiction and prevent relapse? How can we achieve reversal learning of existing habits? Which molecular pathways are implicated in these processes? To answer these questions we need animal models of addiction, and positive reinforcement is a prominent one.

Even though the list of positive reinforcement applications is long, it is worth mentioning the use of positive reinforcement as a training method to strengthen desired behaviors. Learning with positive reinforcement has been effective in a wide range of species, including fish, birds, mice and monkeys. Moreover, due to its gentle nature, operant conditioning with positive reinforcement has been used in human research and especially in children with cognitive disabilities, to enhance cooperation with the physician or caregiver and provide better healthcare.[18][19]

Conclusions

All in all, positive reinforcement is a powerful and versatile behavioral paradigm that can be used with a variety of experimental protocols to address scientific questions. Positive reinforcement is conserved among several species, ranging from fish and pigeons to rodents and other mammals, a fact that highlights its importance and deep foundations in animal physiology and behavior. Additionally, it is highly relevant to human behavior, as much of our everyday behavior is shaped by our response to positive reinforcers, such as feelings of joy, love or even money. Compared to punishment, positive reinforcement represents a kinder yet equally efficient type of learning process, and is thus a desirable method to implement in humans. Furthermore, partial reinforcement is more relevant to everyday life than continuous reinforcement, since we rarely get rewarded every single time we perform an action. Consequently, more research must be done to further elucidate the mechanisms that regulate positive reinforcement and the brain areas that participate in this behavior.

When using positive reinforcement in the lab, it is important to remember that the schedule of reinforcement can have a major influence on how quickly the behavior is learned, how frequently the behavior is expressed and how long it can persist after the removal of the reinforcer.

References

- Schultz, W. (2015). Neuronal reward and decision signals: from theories to data. Physiological reviews, 95(3), 853-951.

- https://www.drugabuse.gov/publications/teaching-packets/neurobiology-drug-addiction/section-iv-action-cocaine/7-summary-addictive-drugs-activate-reward

- Carlezon Jr, W. A., & Chartoff, E. H. (2007). Intracranial self-stimulation (ICSS) in rodents to study the neurobiology of motivation. Nature protocols, 2(11), 2987.

- Keleta, Y. B., & Martinez, J. L. (2012). Brain circuits of methamphetamine place reinforcement learning: The role of the hippocampus‐VTA loop. Brain and behavior, 2(2), 128-141.

- Yoo, J. H., Zell, V., Gutierrez-Reed, N., Wu, J., Ressler, R., Shenasa, M. A., … & Hnasko, T. S. (2016). Ventral tegmental area glutamate neurons co-release GABA and promote positive reinforcement. Nature communications, 7, 13697.

- Sharf, R., McKelvey, J., & Ranaldi, R. (2006). Blockade of muscarinic acetylcholine receptors in the ventral tegmental area prevents acquisition of food-rewarded operant responding in rats. Psychopharmacology, 186(1), 113-121.

- Sharf, R., Lee, D. Y., & Ranaldi, R. (2005). Microinjections of SCH 23390 in the ventral tegmental area reduce operant responding under a progressive ratio schedule of food reinforcement in rats. Brain research, 1033(2), 179-185.

- McCullough, L. D., Cousins, M. S., & Salamone, J. D. (1993). The role of nucleus accumbens dopamine in responding on a continuous reinforcement operant schedule: a neurochemical and behavioral study. Pharmacology Biochemistry and Behavior, 46(3), 581-586.

- Sokolowski, J. D., Conlan, A. N., & Salamone, J. D. (1998). A microdialysis study of nucleus accumbens core and shell dopamine during operant responding in the rat. Neuroscience, 86(3), 1001-1009.

- Cousens, G. A., & Beckley, J. T. (2007). Antagonism of nucleus accumbens M2 muscarinic receptors disrupts operant responding for sucrose under a progressive ratio reinforcement schedule. Behavioural brain research, 181(1), 127-135.

- Myal, S., O’Donnell, P., & Counotte, D. S. (2015). Nucleus accumbens injections of the mGluR2/3 agonist LY379268 increase cue-induced sucrose seeking following adult, but not adolescent sucrose self-administration. Neuroscience, 305, 309-315.

- Ostlund, S. B., & Balleine, B. W. (2005). Lesions of medial prefrontal cortex disrupt the acquisition but not the expression of goal-directed learning. Journal of Neuroscience, 25(34), 7763-7770.

- Faccidomo, S., Salling, M. C., Galunas, C., & Hodge, C. W. (2015). Operant ethanol self-administration increases extracellular-signal regulated protein kinase (ERK) phosphorylation in reward-related brain regions: selective regulation of positive reinforcement in the prefrontal cortex of C57BL/6J mice. Psychopharmacology, 232(18), 3417-3430.

- Steidl, S., & Veverka, K. (2015). Optogenetic excitation of LDTg axons in the VTA reinforces operant responding in rats. Brain research, 1614, 86-93.

- Cheung, T. H., & Cardinal, R. N. (2005). Hippocampal lesions facilitate instrumental learning with delayed reinforcement but induce impulsive choice in rats. BMC Neuroscience, 6, 36.

- Nishijo, H., Ono, T., Nakamura, K., Kawabata, M., & Yamatani, K. (1986). Neuron activity in and adjacent to the dorsal amygdala of monkey during operant feeding behavior. Brain research bulletin, 17(6), 847-854.

- Rossi, M. A., Sukharnikova, T., Hayrapetyan, V. Y., Yang, L., & Yin, H. H. (2013). Operant self-stimulation of dopamine neurons in the substantia nigra. PLoS One, 8(6), e65799.

- Watling, R., & Schwartz, I. S. (2004). Understanding and implementing positive reinforcement as an intervention strategy for children with disabilities. American Journal of Occupational Therapy, 58(1), 113-116.

- Manassis, K., Young, A., & Jellinek, M. S. (2001). Adapting positive reinforcement systems to suit child temperament. Journal of the American Academy of Child & Adolescent Psychiatry, 40(5), 603-605.

- Wise, R. A., & McDevitt, R. A. (2018). Drive and reinforcement circuitry in the brain: origins, neurotransmitters, and projection fields. Neuropsychopharmacology, 43(4), 680.

- https://courses.lumenlearning.com/wsu-sandbox/chapter/operant-conditioning/

- http://www.neuroanatomy.wisc.edu/coursebook/neuro7(2).pdf